Open source · Self-hosted · Free forever

Run AI tools on your own infrastructure, across all your GPUs.

Use pre-built or ComfyUI-based AI tools for generation and training. They run in parallel across every GPU you own, with optional burst to your own private cloud.


The Problem

AI works in demos. Breaks in production.

Teams experimenting with AI quickly hit limits:

Tools aren't usable by teams

Workflows live with one person. Others can't use them.

Compute is underutilized

Multiple GPUs exist, but only one is used at a time.

Scaling is painful

Cloud requires setup, rewrites, and ongoing management.

Result: promising experiments don't translate into real production output.

What You Get

Ready-to-use AI tools, running on your full compute.

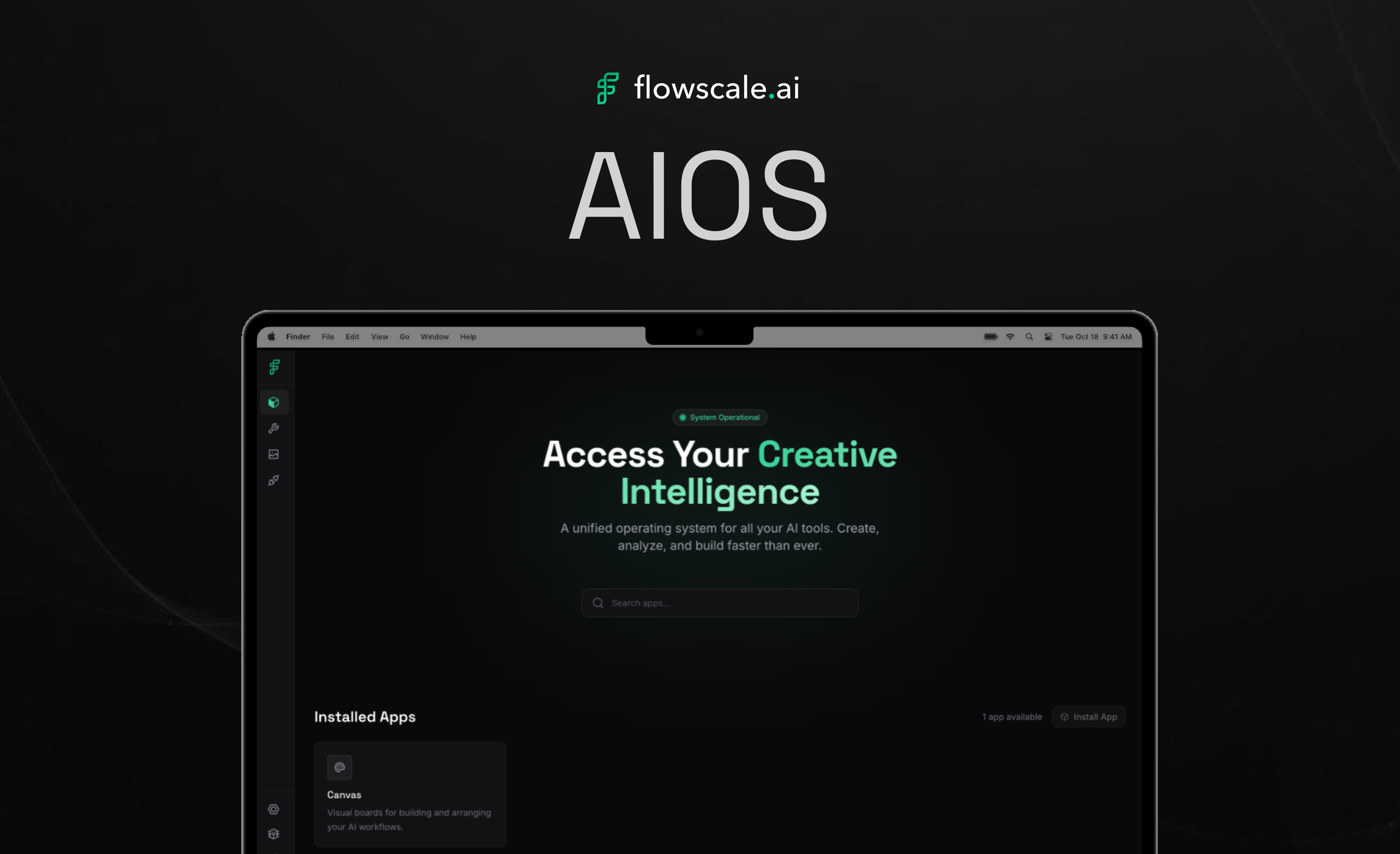

AIOS combines two things:

Build and run your own AI tools

Use pre-built, production-ready interfaces for generation, training, and editing, or build and run ComfyUI workflows as shareable AI tools.

Compute pushed to the max

Automatic parallel orchestration across every connected GPU, so your hardware doesn't sit idle.

Core Value

Your hardware, fully utilized.

AIOS makes that the default.

What AIOS Does

Start with parallel compute.

Unlock everything else.

Run tools across all your GPUs

AIOS detects every GPU and runs jobs in parallel — one job per GPU.
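The one-job-per-GPU model can be pictured with a small Python sketch. It is not AIOS code: `run_one_job_per_gpu`, `run_job`, and `fake_generate` are hypothetical names, and real GPU detection is replaced by a plain list of GPU ids. Each GPU gets one worker that pulls the next pending job from a shared queue, so a GPU never runs two jobs at once and no GPU waits while work remains.

```python
import queue
import threading
from typing import Callable, List, Tuple


def run_one_job_per_gpu(
    gpu_ids: List[int],
    jobs: List[str],
    run_job: Callable[[int, str], str],
) -> List[Tuple[int, str, str]]:
    """Hypothetical dispatcher (not the AIOS API): one worker per GPU, one job per GPU at a time."""
    pending: "queue.Queue[str]" = queue.Queue()
    for job in jobs:
        pending.put(job)

    results: List[Tuple[int, str, str]] = []
    results_lock = threading.Lock()

    def worker(gpu_id: int) -> None:
        # Each worker owns exactly one GPU and runs its jobs one after another.
        while True:
            try:
                job = pending.get_nowait()
            except queue.Empty:
                return  # No work left; this GPU goes idle.
            output = run_job(gpu_id, job)
            with results_lock:
                results.append((gpu_id, job, output))

    # Start one worker per GPU id; the workers run concurrently.
    workers = [threading.Thread(target=worker, args=(g,)) for g in gpu_ids]
    for w in workers:
        w.start()
    for w in workers:
        w.join()
    return results


def fake_generate(gpu_id: int, job: str) -> str:
    # Stand-in for a real generation call pinned to one device
    # (for example, a subprocess launched with CUDA_VISIBLE_DEVICES set).
    return f"{job} rendered on GPU {gpu_id}"


if __name__ == "__main__":
    outputs = run_one_job_per_gpu([0, 1, 2, 3], [f"job-{i}" for i in range(10)], fake_generate)
    for gpu_id, job, output in sorted(outputs, key=lambda r: r[1]):
        print(output)
```

Which GPU picks up which job depends on timing, so the printed GPU ids vary between runs. Threads are enough for this sketch because the heavy work would happen on the GPU or in a subprocess; a production scheduler would also need retries, progress reporting, and failure handling.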

Burst to your private cloud

Run additional jobs on your own cloud GPUs through Modal.com. Same tools. Same interface.

Make tools usable by your team

Turn workflows into simple tools. Artists use the UI. Developers use the API.

How It Works

Tools + compute.

One system.

Pick a tool (generation, training)

Run multiple jobs

Each GPU handles one job

Everything runs in parallel

Optional: run additional jobs on your private Modal.com GPUs.
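The optional burst step can be read as an overflow rule: local GPUs are filled first, and only the jobs beyond them go to the cloud. The Python sketch below assumes that rule plus a user-set cap on cloud jobs; `plan_batch`, `max_cloud_jobs`, and the `"cloud"` and `"queued"` targets are hypothetical, and no Modal.com API is called.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Assignment:
    job: str
    target: str  # "local:<gpu_id>", "cloud", or "queued" (hypothetical labels)


def plan_batch(
    jobs: List[str],
    local_gpu_ids: List[int],
    burst_enabled: bool,
    max_cloud_jobs: int,
) -> List[Assignment]:
    """Hypothetical first-wave plan: fill local GPUs, burst the overflow, queue the rest."""
    plan: List[Assignment] = []
    cloud_used = 0
    for i, job in enumerate(jobs):
        if i < len(local_gpu_ids):
            # One job per local GPU, in the order the GPUs are listed.
            plan.append(Assignment(job, f"local:{local_gpu_ids[i]}"))
        elif burst_enabled and cloud_used < max_cloud_jobs:
            # Every local GPU already has a job: send this one to the private cloud.
            plan.append(Assignment(job, "cloud"))
            cloud_used += 1
        else:
            # No capacity left in this wave: wait for the next free slot.
            plan.append(Assignment(job, "queued"))
    return plan


if __name__ == "__main__":
    for a in plan_batch(["a", "b", "c", "d", "e"], [0, 1], burst_enabled=True, max_cloud_jobs=2):
        print(a.job, "->", a.target)
```

With two local GPUs, burst enabled, and a cap of two cloud jobs, the five jobs are planned as `a -> local:0`, `b -> local:1`, `c -> cloud`, `d -> cloud`, and `e -> queued`. With burst disabled, `c` through `e` would all be queued. Filling local hardware first keeps cloud spend down, but the real AIOS policy may differ.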

Get Started

From download to output in minutes.

Download AIOS

Takes minutes to install.

Open a tool

Access built-in tools for generation and training.

Run jobs across your GPUs

Submit multiple jobs from the UI or through the API.

Scale with your private cloud

Connect your Modal.com account to burst seamlessly to your own cloud GPUs without changing tools.

Who It's For

For teams running AI beyond experiments.

Solo creators

Run tools faster using all your GPUs.

Technical artists / Pipeline TDs

Stop managing workflows and compute manually.

Studios / creative teams

Run AI tools across your infrastructure — with full control.

Why FlowScale

AIOS is the missing layer between AI tools and compute.

FlowScale sits between experimental AI workflows and production pipelines — converting complex node graphs into reliable tools that creative teams can use safely across their studio infrastructure.

All features.

No limits. Self-hosted.

FlowScale is built on open models and open systems, giving studios full control over how AI operates inside their production pipeline.

Studios can:

Pricing

Free for individuals.

Scale to production.

Community

Completely free and open source. For individuals and teams experimenting with AI workflows.

Enterprise / Studio

For studios deploying AI infrastructure at scale. Paid tier tailored to your studio's needs.

Run AI on your own infrastructure.

Turn your workstation into a parallel AI engine.